I recently completed an installation at Farnborough in Hampshire, where I got to do something I’ve wanted to do for quite a while: draw a rainbow plane. (More images of the installation are available on Flickr.)

Farnborough is the home of British aviation, site of the first powered flight on British soil (by the American showman Samuel Cody) in 1908, as well as the British Army Balloon School, the formation of the RAF, the Royal Aircraft Establishment, and the research facilities which produced the jet engine, carbon fibre and more. Much of the site of the RAE has now been cleared, but, thanks in large part to the efforts of the Farnborough Air Sciences Trust, several buildings have been saved, including the extraordinary wind tunnels and the magnificent, reconstructed airship shed, under which the installation is sited.

Like the Drone Shadows, the Rainbow Plane is a 1:1 outline of an aircraft – in this case, the Miles M.52, an experimental jet plane developed at Farnborough in the 1940s. The M.52 never flew, but several of its innovations, including the all-moving tailplane and the biconvex “Gillette” wing, were crucial to the success of the American effort to break the sound barrier with the Bell X-1.

The M.52 is shown here as if distorted by the characteristic pansharpening effect of satellite photography – as if viewed, in flight, from space. I’ve been fascinated by the “rainbow plane” effect visible in satellite maps for some time, and have collected many examples.

I’d seen many of these but didn’t really understand what I was looking at, until I started to process the imagery myself. After installing the Washington DC drone shadow, I purchased commercial satellite imagery of the city, in order to try to see my drone from space.

The image which I purchased came from DigitalGlobe’s WorldView-2, a 6000lb commercial observation satellite fired into space aboard a Delta II rocket from Vandenberg Air Force Base, California, in October 2009. DigitalGlobe was founded in 1992, in anticipation of that year’s Land Remote Sensing Policy Act, which permitted private companies to enter the satellite imaging business. It received its initial funding from Silicon Valley, and from corporations in the US, Europe and Japan. Much of Google Maps’ imagery is purchased from DigitalGlobe.

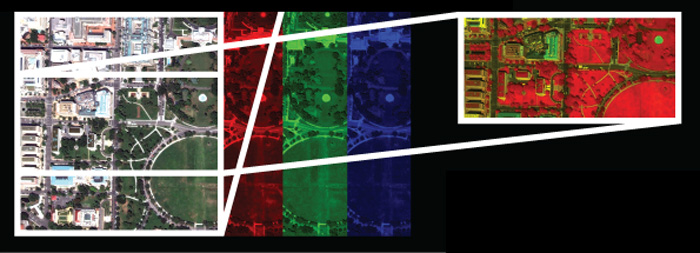

The image shows 25 square kilometres of Washington, D.C., photographed on 26 August 2013 – but it’s not a photograph. Observation satellites carry not conventional cameras but multispectral scanners, which contain an array of sensors for recording data across a range of frequencies. WorldView-2’s scanner contains eight sensors with a resolution of 1.85m per pixel: four in the visible spectrum, recording red, yellow, green, and blue, and four more, in the deep blue, the red edge, and the inner and outer near-infrared, covering a total spectrum of more than twice the visual range of the human eye. One more sensor measures panchromatic intensity across the visible spectrum, allowing images to be sharpened to a resolution of 0.46m per pixel.

To make this image, data from different sensors has to be combined. A 5-3-2 image, in this case, composites data from the visible red, green and blue sensors (bands 5, 3 and 2) into a single “true colour” image (although there is nothing ‘true’ about this). This image is then used to add colour to the higher-resolution but black-and-white panchromatic image, a process called “panchromatic sharpening.”
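A toy sketch of that compositing and sharpening step may make it more concrete. It is not the processing actually applied to the WorldView-2 image: it assumes a simple ratio-based (Brovey-style) method, and the names `composite`, `pansharpen`, `bands`, `pan` and `FACTOR`, along with every pixel value, are made up for illustration. The factor of 4 comes from the 1.85m and 0.46m resolutions quoted above, which give roughly four panchromatic pixels per colour pixel along each axis.

```python
# Toy pansharpening sketch in plain Python. All pixel values are made up.

FACTOR = 4  # roughly 1.85 m / 0.46 m: pan pixels per colour pixel, per axis

# Hypothetical low-resolution band data (1 row x 2 pixels), keyed by band number.
bands = {
    5: [[0.6, 0.1]],  # red
    3: [[0.4, 0.1]],  # green
    2: [[0.2, 0.1]],  # blue
}

# Hypothetical high-resolution panchromatic image (4 rows x 8 pixels).
pan = [[0.1 * ((x + y) % 4 + 1) for x in range(8)] for y in range(4)]


def composite(bands, order):
    """Stack three bands, e.g. (5, 3, 2), into rows of (r, g, b) tuples."""
    r, g, b = (bands[n] for n in order)
    return [list(zip(r_row, g_row, b_row)) for r_row, g_row, b_row in zip(r, g, b)]


def pansharpen(rgb, pan, factor):
    """Colour each pan pixel from the coarse colour pixel that covers it.

    The colour is rescaled so that its mean brightness equals the pan value,
    keeping the hue of the colour image and the detail of the pan image.
    """
    sharpened = []
    for y, pan_row in enumerate(pan):
        row = []
        for x, intensity in enumerate(pan_row):
            r, g, b = rgb[y // factor][x // factor]
            mean = (r + g + b) / 3
            scale = intensity / mean if mean else 0.0
            row.append((r * scale, g * scale, b * scale))
        sharpened.append(row)
    return sharpened


rgb = composite(bands, (5, 3, 2))
sharp = pansharpen(rgb, pan, FACTOR)
print(len(sharp), "x", len(sharp[0]), "pixels")
```

Each output pixel takes its brightness from the panchromatic image and its colour balance from the coarser 5-3-2 composite, so the colour is always smeared over a four-by-four block of sharper detail.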

This is the process which produces the rainbow planes, which move fast enough to blur themselves across the satellites’ different chromatic sensors. It’s a glitch, but like all good glitches the rainbow plane is also a key to uncovering the functioning of the image-making machines, a glimpse into the way the machines see the world.
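The effect can be imitated with a very simplified model, assuming that each colour band records the scene at a slightly different moment. The capture order, the delays, the plane's speed and all the names below (`capture`, `composite_strip`, `BAND_DELAY` and so on) are hypothetical, chosen only to show how a moving object separates into colours while the static ground does not.

```python
# Toy model of a "rainbow plane" on a strip of ground one pixel tall.
# Every number here is an assumption for illustration, not satellite data.

WIDTH = 12          # length of the strip, in pixels
PLANE_START = 3     # plane's pixel position when the first band is recorded
BAND_DELAY = {"red": 0, "green": 1, "blue": 2}  # assumed capture order, in time steps


def capture(delay, speed):
    """Record one band: dark ground (0) with a bright plane (1) wherever it is now."""
    position = PLANE_START + speed * delay
    return [1 if x == position else 0 for x in range(WIDTH)]


def composite_strip(speed):
    """Combine the three bands into (r, g, b) pixels, as in a true-colour image."""
    channels = [capture(BAND_DELAY[name], speed) for name in ("red", "green", "blue")]
    return list(zip(*channels))


parked = composite_strip(speed=0)   # a plane standing still
moving = composite_strip(speed=2)   # a plane moving 2 pixels per time step

for label, strip in (("parked", parked), ("moving", moving)):
    bright = {x: px for x, px in enumerate(strip) if any(px)}
    print(label, bright)
```

In this model the parked plane appears as a single white pixel, because all three bands agree on where it is. The moving plane appears three times, once in pure red, once in green and once in blue, strung out along its direction of travel.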
